📂 projects/kappa/

Kappa Programming Language

Similar to my other software projects, this was worked on quite a while ago, primarily in the summer of '23.

As per usual with my software projects, this can be found on GitHub.

I continued my journey of making everything from scratch into the world of compiler design. I got to a point in developing Chik engine where I wanted to make a scripting language, so that users could define game functionality in scripts, rather than hardcoded C. The intent of this was to provide an interface similar to that of Garry's Mod, where one game executable can play all sort of user-created multiplayer content.

Anyways, the syntax of the programming language looks something like this:

f32: cos_approx(f32: t) { return (9.86960440109 - 4.0*t*t) / (9.86960440109 + t*t); };

f32: cos(f32: t) {

if t < 0.0 do t = 0.0 - t;

while t > 6.28318530718 do t = t - 6.28318530718;

if t < 1.57079632679 do return cos_approx(t);

return 0.0 - cos_approx(t - 3.14159265359);

};

I aimed to make the syntax very much like C, with some modern touch ups. More importantly, this language provides some quality of life features that C does not.

I wanted to make this language very easy to compile, with the most concise parsing loop possible.

Unfortunately, C made some interesting design choices that make the parsing loop a little convoluted,

most notably the pointer assignment operator. In the case of *ptr = 0xdeadbeef;, the = operator

expects a variable on the left to assign the expression on the right to. But yet the C compiler

accepts *ptr as a literal, and also as something assignable. Additionally, the unary operator parsing

isn't the most straightforward, with expressions like -a - -b requiring special care. This is why you

see lines like return 0.0 - cos_approx(t - 3.14159265359); instead of return -cos_approx(t - 3.14159265359);.

Those are just some of the struggles I ran into while writing the compiler, and I'm sure if I stared long enough at it, I could design the programming language around these issues, but for now this is what the language will provide.

How it works

The plaintext is tokenized, then compiled into a tree, and then the tree is assembled into the intermediate represenation. I decided to also create a homemade assembly-like intermediate representation to ease some of the computation required when compiling, and instead offload it onto the interpreter/assembler, where those tasks may end up being trivial. I was able to make the tokenizer code very concise:

/*

* Tokenizes a KAPPA source file.

*

* @param const char *source The source to tokenize.

*/

_k_token_t *_k_tokenize(const char *source) {

int idx = 0;

int line = 1;

int col = 1;

int ti = 0;

_k_token_t *tokens = (_k_token_t*)0x0;

do {

_k_skip_whitespace(source, &idx, &line, &col);

tokens = realloc(tokens, (ti + 1) * sizeof(_k_token_t));

if (tokens == (_k_token_t*)0x0) return (_k_token_t*)0x0;

tokens[ti].line = line;

tokens[ti].column = col;

tokens[ti].index = idx;

tokens[ti].tokenable = _k_deduce_token_type(source, idx);

tokens[ti].str = _k_parse_token(source, tokens[ti].tokenable, &idx, &line, &col);

} while (tokens[ti++].tokenable->type != _K_TOKEN_TYPE_EOF);

return tokens;

}

And by defining the tokens in a header, and creating some small helper functions, this functions rapidly tokenizes any input plaintext.

Example token definition:

const _k_tokenable_t _tokenables[] = {

{_K_TOKEN_TYPE_UNKNOWN, (const char*)0x0, _K_TOKEN_TERMINATABLE_UNKNOWN},

{_K_TOKEN_TYPE_EOF, "\0", _K_TOKEN_TERMINATABLE_SINGLE},

{_K_TOKEN_TYPE_IDENTIFIER, "abcdefghijklmnopqrstuvwxyzABCDEFGHIJKLMNOPQRSTUVWXYZ0123456789_", _K_TOKEN_TERMINATABLE_MULTIPLE},

...

Now, the tokens go directly into the compiler:

switch (token->tokenable->type) {

case _K_TOKEN_TYPE_IDENTIFIER:

case _K_TOKEN_TYPE_NUMBER: { _k_compile_literal(&node, token); break; }

case _K_TOKEN_TYPE_NEWEXPRESSION:

case _K_TOKEN_TYPE_NEWSTATEMENT:

case _K_TOKEN_TYPE_NEWINDEX: { _k_compile_context(&node, token); break; }

case _K_TOKEN_TYPE_ENDEXPRESSION: { _k_compile_end_expression(&node, token); break; }

case _K_TOKEN_TYPE_SEPARATOR: { _k_compile_separator(&node, token); break; }

case _K_TOKEN_TYPE_ENDSTATEMENT: { _k_compile_end_statement(&node, token); break; }

case _K_TOKEN_TYPE_ENDINDEX: { _k_compile_end_index(&node, token); break; }

case _K_TOKEN_TYPE_ENDLINE: { _k_compile_endline(&node, root, &token, out); break; }

case _K_TOKEN_TYPE_KEYWORD: { _k_compile_keyword(&node, token); break; }

case _K_TOKEN_TYPE_ASSIGNMENT:

case _K_TOKEN_TYPE_OPERATOR:

case _K_TOKEN_TYPE_DECLARATOR: { _k_compile_operator(&node, token); break; }

}

And then the tree is given to the assembler, which generates the intermediate representation:

/*

* Compiles a binary operation.

*

* @param _k_token_t *token The token to compile.

* @param int *r The register to compile to.

* @param FILE *out The output file.

*/

void _k_assemble_bin_op(_k_token_t *token, int *r, FILE *out) {

if (strcmp(token->str, "<") == 0) { fprintf(out, "\tlesrr: r%d r%d r%d\n", *r - 1, *r - 1, *r); --*r; }

if (strcmp(token->str, ">") == 0) { fprintf(out, "\tgrerr: r%d r%d r%d\n", *r - 1, *r - 1, *r); --*r; }

if (strcmp(token->str, "<=") == 0) { fprintf(out, "\tleqrr: r%d r%d r%d\n", *r - 1, *r - 1, *r); --*r; }

if (strcmp(token->str, ">=") == 0) { fprintf(out, "\tgeqrr: r%d r%d r%d\n", *r - 1, *r - 1, *r); --*r; }

if (strcmp(token->str, "==") == 0) { fprintf(out, "\tequrr: r%d r%d r%d\n", *r - 1, *r - 1, *r); --*r; }

if (strcmp(token->str, "+") == 0) { fprintf(out, "\taddrr: r%d r%d r%d\n", *r - 1, *r - 1, *r); --*r; }

if (strcmp(token->str, "-") == 0) { fprintf(out, "\tsubrr: r%d r%d r%d\n", *r - 1, *r - 1, *r); --*r; }

if (strcmp(token->str, "*") == 0) { fprintf(out, "\tmulrr: r%d r%d r%d\n", *r - 1, *r - 1, *r); --*r; }

if (strcmp(token->str, "/") == 0) { fprintf(out, "\tdivrr: r%d r%d r%d\n", *r - 1, *r - 1, *r); --*r; }

if (strcmp(token->str, ",") == 0) { fprintf(out, "\tpushr: r%d\n", *r); --*r; }

}

If all has gone well, you will get some intermediate representation that looks like this:

cos:

poprr: r1

newsv: f32 t

saver: t r1

loadr: r1 t

movrf: r2 0.0

lesrr: r1 r1 r2

cmprd: r1 0

jmpeq: S0

movrf: r1 0.0

loadr: r2 t

subrr: r1 r1 r2

saver: t r1

Then, this can go to the interpreter for runtime.

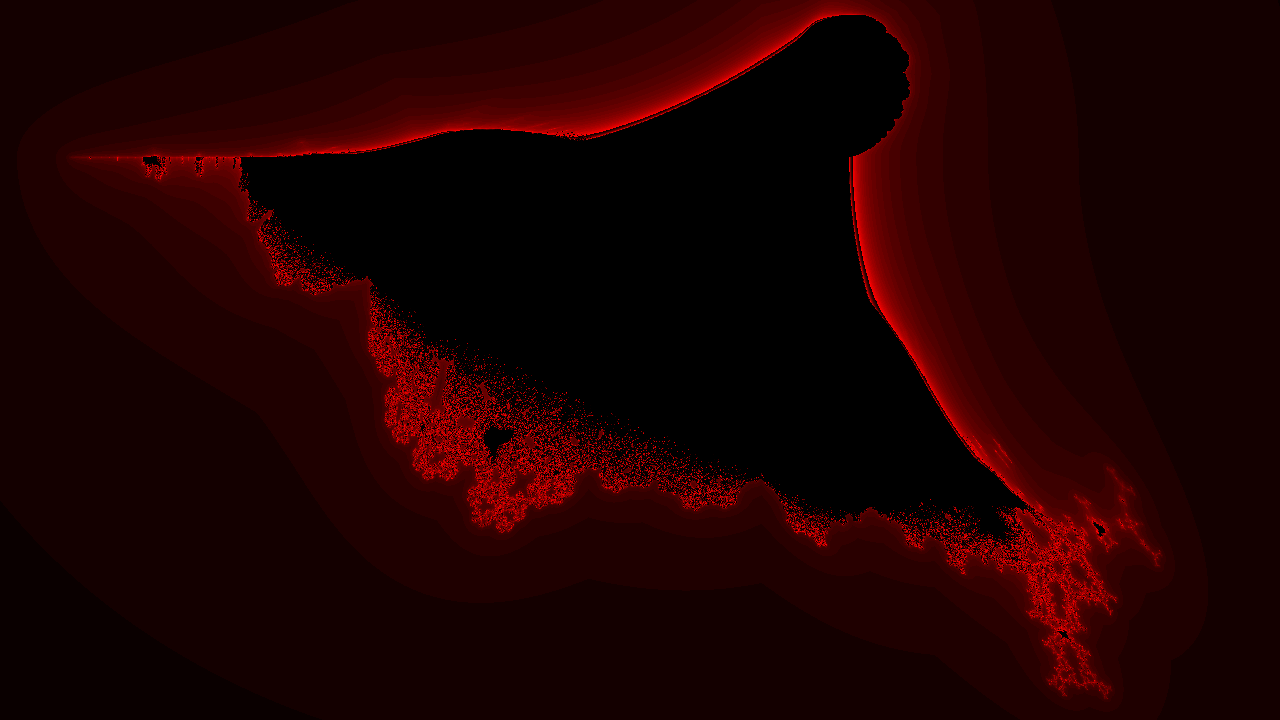

Fractal rendering

The first code I wrote for this programming language outside of the math library, was code to compute fractals. Here is the code that had functions called from in the interpreter environment over each pixel to render the fractal:

f32: rmin() {

return 0.0 - 2.3;

};

f32: rmax() {

return 1.0;

};

f32: imin() {

return 0.0 - 1.5;

};

f32: imax() {

return 1.5;

};

f32: z(f32: re, f32: im) {

f32: tempr = *re;

f32: tempi = *im;

*re = (tempr * tempr) - (tempi * tempi);

*im = 2 * tempr * tempi;

return 0.0;

};

The output fractal looks like this:

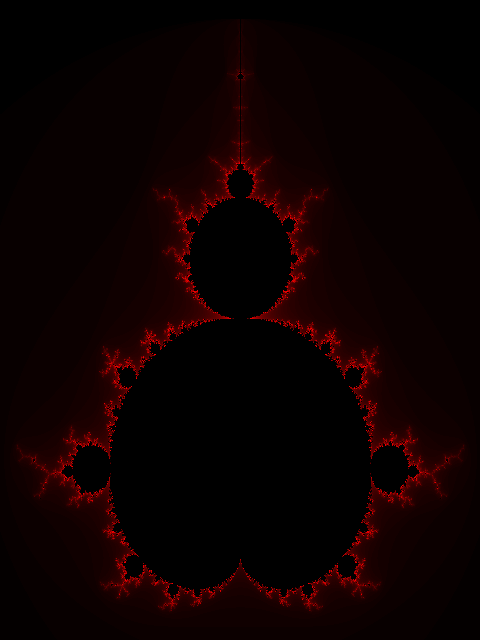

The nice thing about the interpreted language is that I can make a quick function for extracting magnitudes from complex numbers, and then I can make the burning ship fractal quickly: